Welcome to Your Brain

Authors: Sam Wang, Sandra Aamodt

Tags: #Neurophysiology-Popular works., #Brain-Popular works

wearing earplugs to keep the sound level down. A rock concert clocks in at the same noise

intensity as a chainsaw—and experts recommend limiting exposure to those sounds to no

more than one minute at a time. If you don’t want to stop going to concerts, be aware that

noise-induced damage is cumulative, so the more noise you experience over your life, the

sooner you’ll start to lose your hearing.

Noise causes hearing loss by damaging hair cells, which detect sounds in the inner ear.

As discussed above, hair cells have a set of thin fibers called the hair bundle extending

from their surface that move in response to sound vibrations. If the hair bundle moves too

much, the fibers can tear, and that hair cell will no longer be able to detect sound. The hair

cells that respond to high-pitched sounds (like a whistle) are most vulnerable and tend to be

lost earlier than the hair cells that respond to low-pitched sounds (like a foghorn). That’s

why noise-related hearing loss tends to begin with difficulty in hearing high-pitched sounds.

Sounds at this frequency are especially critical for understanding speech.

Ear infections are another common cause of hearing loss, so it’s important to get them

diagnosed and treated. Three out of four children get ear infections, and parents should

watch for symptoms, which include tugging at the ears, balance or hearing problems,

difficulty sleeping, and fluid draining from the ears.

Differences in the timing and intensity of sounds reaching your right and left ears help your brain

to figure out where a given sound came from. Sounds coming from straight ahead of you (or straight

behind you) arrive at your left and right ears at exactly the same time. Sounds coming from your right

reach your right ear before they reach your left ear, and so on. Similarly, sounds (at least high-pitched

sounds) coming from the right tend to be a little louder in your right ear; their intensity is reduced in

your left ear because your head is in the way. (Low-pitched sounds can go over and around your

head.) You use timing differences between your ears to localize low- and medium-pitched sounds and

use loudness differences between your ears to localize high-pitched sounds.
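
To put rough numbers on the timing cue, here is a minimal sketch. It assumes a simple straight-line path difference between the ears and ignores the way sound bends around the head. The ear spacing, the speed of sound, and the function name are illustrative assumptions, not figures from this book.

```python
import math

SPEED_OF_SOUND_M_PER_S = 343.0  # assumed: air at roughly room temperature
EAR_SPACING_M = 0.18            # assumed: rough adult ear-to-ear distance


def interaural_time_difference(angle_deg: float) -> float:
    """Seconds by which a sound reaches the right ear before the left ear.

    angle_deg is the direction of the source: 0 is straight ahead,
    +90 is directly to the right, -90 is directly to the left.
    """
    extra_path_m = EAR_SPACING_M * math.sin(math.radians(angle_deg))
    return extra_path_m / SPEED_OF_SOUND_M_PER_S


for angle in (0, 30, 90, -90):
    itd_microseconds = interaural_time_difference(angle) * 1e6
    print(f"{angle:>4} degrees: {itd_microseconds:8.1f} microseconds")
```

Under these assumptions the largest possible difference is about half a millisecond, and a source straight ahead gives no difference at all, which matches the description above.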

Cocktail party: A gathering held to enable forty people to talk about themselves at the

same time. The man who remains after the liquor is gone is the host.

—Fred Allen

When it’s working to identify the content of a sound, the brain is specially tuned to detect signals

that are important for behavior. Many higher brain areas respond best to complex sounds, which

range from particular combinations of frequencies to the order of sounds in time to specific

communication signals. Almost all animals have neurons that are specialized to detect sound signals

that are important to them, like song for birds or echoes for bats. (Bats use a type of sonar to navigate

by bouncing sounds off of objects and judging how quickly they come back.) In humans, an especially

important feature of sound interpretation is the recognition of speech, and several areas of the brain

are devoted to this process.

Practical tip: Improving hearing with artificial ears

Hearing aids, which make sounds louder as they enter the ear, do not help patients

whose deafness results from damage to the sound-sensing hair cells in the cochlea.

However, many of these patients can benefit from a cochlear implant, which is an

electronic device that is surgically implanted inside the ear. It picks up sounds using a

microphone placed in the outer ear, then stimulates the auditory nerve, which sends sound

information from the ear to the brain. About sixty thousand people around the world have a

cochlear implant.

Compared to normal hearing, which uses fifteen thousand hair cells to sense sound

information, cochlear implants are very crude devices, producing only a small number of

different signals. This means that patients with these implants initially hear odd sounds that

are nothing like those associated with normal hearing.

Fortunately, the brain is very smart about learning to interpret electrical stimulation

correctly. It can take months to learn to understand what these signals mean, but about half

of the patients eventually learn to discriminate speech without lipreading and can even talk

on the phone. Many others find that their ability to read lips is improved by the extra

information provided by their cochlear implants, although a few patients never learn to

interpret the new signals and don’t find the implants helpful at all. Children more than two

years old can also receive implants and seem to do better at learning to use this new source

of sound information than adults do, probably because the brain’s ability to learn is

strongest in childhood (see Chapter 11).

Practical tip: How to hear better on your cell phone in a loud room

Talking on your cell phone in a noisy place is often a pain. If you’re like us, you’ve

probably tried to improve your ability to hear by putting your finger in your other ear but

found that it doesn’t work very well.

Don’t give up. There is a way to hear better by using your brain’s abilities.

Counterintuitively, the way to do it is to cover the mouthpiece. You will hear just as much noise

around you, but you’ll be able to hear your friend better. Try it. It works!

How can this be? The reason this trick works (and it will, on most normal phones,

including cell phones) is that it takes advantage of your brain’s ability to separate different

signals from each other. It’s a skill you often use in crowded and confusing situations; one

name for it is the “cocktail party effect.”

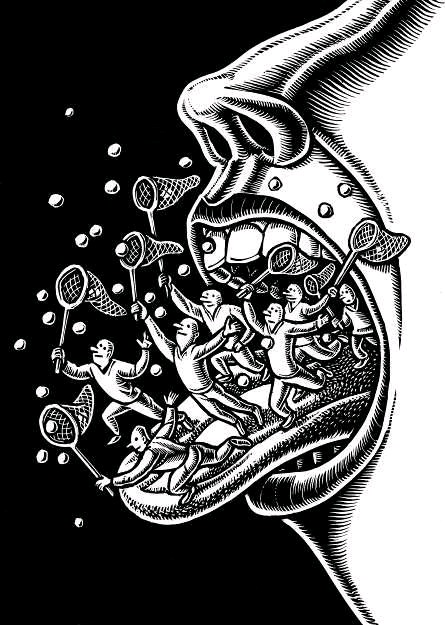

At a party, you often have to make out one voice and separate it from the others. But

voices come from different directions and sound different from one another—high, low,

nasal, baritone, the works. As it turns out, your brain shines in this situation. The simplest

sketch of what your brain is doing looks like this:

voice » left ear » BRAIN « right ear « room noise

More complicated situations come up, such as multiple voices coming from different

directions. The point is that brains are very good at what scientists call the source

separation problem. This is a hard problem for most electronic circuitry. Distinguishing

voices from each other is a feat that communications technology cannot replicate. But your

brain does it effortlessly.

Enter your telephone. The phone makes the brain’s task harder by feeding sounds from

the room you’re in through its circuitry and mixing them with the signal it gets from the

other phone. So you get a situation that looks like this:

voice plus distorted room noise » left ear » BRAIN « right ear « room noise

This is a harder problem for your brain to solve because now your friend’s transmitted

voice and the room noise are both tinny and mixed together in one source. That’s hard to

unmix. By covering the mouthpiece, you can stop the mixing from happening and re-create

the live cocktail party situation.
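
The two situations sketched above can be mimicked with a toy signal model. This is only an illustration under stated assumptions: the function names, the `leak_gain` value, and the test signals are hypothetical, and the "tinny" distortion added by the phone is not modeled.

```python
import math
import random


def earpiece_signal(friend_voice, room_noise, mouthpiece_covered, leak_gain=0.5):
    """Samples delivered to the ear that is holding the phone.

    leak_gain is an assumed fraction of room noise that the phone's own
    mouthpiece feeds back into the earpiece.
    """
    if mouthpiece_covered:
        # Nothing from the room gets into the phone, so only the voice arrives.
        return list(friend_voice)
    return [v + leak_gain * n for v, n in zip(friend_voice, room_noise)]


def leaked_noise_energy(earpiece, friend_voice):
    """Energy of whatever the earpiece carries besides the friend's voice."""
    return sum((e - v) ** 2 for e, v in zip(earpiece, friend_voice))


rng = random.Random(0)  # fixed seed so the sketch is repeatable
friend_voice = [math.sin(2 * math.pi * 200 * t / 8000) for t in range(800)]
room_noise = [rng.uniform(-1, 1) for _ in range(800)]

open_phone = earpiece_signal(friend_voice, room_noise, mouthpiece_covered=False)
covered_phone = earpiece_signal(friend_voice, room_noise, mouthpiece_covered=True)

print("noise mixed into earpiece, mouthpiece open:   ",
      leaked_noise_energy(open_phone, friend_voice))
print("noise mixed into earpiece, mouthpiece covered:",
      leaked_noise_energy(covered_phone, friend_voice))
```

In both cases the other ear still hears the full room noise. The only thing that changes is whether the phone ear receives a clean voice or a voice already blended with noise.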

Of course, that brings up a new question: why do telephones do this in the first place?

The reason is that decades ago, engineers found that mixing the caller’s own voice with the

received signal gives more of a feeling of talking live. This mixing, which phone engineers

call “sidetone,” does do that, but in cases where the caller is in a

noisy room, it makes the signal harder to hear. Until phone signals are as clear as live

conversation, we are stuck with this problem—which you can now fix using the power of

your brain. As the phone ad says, “Can you hear me now?”

Your brain changes its ability to recognize certain sounds based on your experiences with

hearing. For instance, young children can recognize the sounds of all the languages of the world, but at

around eighteen months of age, they start to lose the ability to distinguish sounds that are not used in

their own language. This is why the English r and l sound the same to Japanese speakers, for instance.

In Japanese, there is no distinction between these sounds.

You might guess that people just forget distinctions between sounds they haven’t practiced, but

that’s not it. Electrical recordings from the brains of babies (made by putting electrodes on their skin)

show that their brains are actually changing as they learn about the sounds of their native language. As

babies become toddlers, their brains respond more to the sounds of their native language and less to

other sounds.

Once this process is complete, the brain automatically places all the speech sounds that it hears

into its familiar categories. For instance, your brain has a model of the perfect sound of the vowel

o, and all the sounds that are close enough to that sound are heard as being the same, even though

they may be composed of different frequencies and intensities.
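
One way to picture this sorting into categories is a nearest-prototype rule. The sketch below is an analogy, not a claim about how the brain does it. It assumes each vowel can be summarized by two resonant frequencies, and the prototype values are rough illustrative numbers chosen for the example.

```python
import math

# Assumed prototype sounds, each described by two frequencies in hertz.
# The labels and numbers are illustrative only.
VOWEL_PROTOTYPES_HZ = {
    "ee": (270, 2290),
    "ah": (730, 1090),
    "oo": (300, 870),
}


def perceived_vowel(first_hz: float, second_hz: float) -> str:
    """Return the label of the prototype closest to the incoming sound."""
    sound = (first_hz, second_hz)
    return min(
        VOWEL_PROTOTYPES_HZ,
        key=lambda label: math.dist(sound, VOWEL_PROTOTYPES_HZ[label]),
    )


# Two physically different sounds land in the same category.
print(perceived_vowel(300, 2200))
print(perceived_vowel(250, 2400))
```

Both example sounds differ from each other and from the stored prototype, yet the rule gives them the same label, which is the effect described above.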

As long as you’re not trying to learn a new language, this specialization for your native language

is useful, since it allows you to understand a variety of speakers in many noise conditions. The same

word produced by two different speakers can contain very different frequencies and intensities, but

your brain hears the sounds as being more alike than they really are, which makes the words easier to

recognize. Speech recognition software, on the other hand, requires a quiet environment and has

difficulty understanding speech produced by more than one person because it relies on the simple

physical properties of speech sounds. This is another way that the brain does its job better than a

computer. Personally, we’re not going to be impressed with computers until they start creating their

own languages and cultures.

Accounting for Taste (and Smell)

Animals are among the most sophisticated chemical detection machines in the world. We are able to

distinguish thousands of smells, including (to name a few) baking bread, freshly washed hair, orange

peels, cedar closets, chicken soup, and a New Jersey Turnpike rest stop in summer.

We are able to detect all these smells because our noses contain a vast array of molecules that

bind to the chemicals that make up smells. Each of these molecules, called receptors, has its own

preferences for which chemicals it can interact with. The receptors are made of proteins and sit in

your olfactory epithelium, a membrane on the inside surface of your nose. There are hundreds of types

of olfactory receptors, and any smell may activate up to dozens of them at once. When activated, these

receptors send smell information along nerve fibers in the form of electrical impulses. Each nerve

fiber has exactly one type of receptor, and as a result smell information is carried by thousands of

“labeled lines” that go into your brain. A particular smell triggers activity in a combination of fibers.

Your brain makes sense of these labeled lines by examining these patterns of activity.
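
The idea that a smell is a combination of active fibers, not a single fiber, can be sketched as a small lookup. The receptor labels, the smell patterns, and the overlap rule below are all invented for illustration. Real noses use hundreds of receptor types, and nothing here is meant as the brain's actual method.

```python
# Hypothetical patterns: which receptor types each smell activates.
SMELL_PATTERNS = {
    "baking bread": frozenset({"R1", "R4", "R7"}),
    "orange peel": frozenset({"R2", "R4", "R9"}),
    "cedar closet": frozenset({"R3", "R7", "R9"}),
}


def identify_smell(active_fibers) -> str:
    """Return the known smell whose pattern best overlaps the active fibers."""
    active = frozenset(active_fibers)

    def overlap(name: str) -> float:
        pattern = SMELL_PATTERNS[name]
        # Shared fibers divided by all fibers involved in either set.
        return len(active & pattern) / len(active | pattern)

    return max(SMELL_PATTERNS, key=overlap)


print(identify_smell({"R1", "R4", "R7"}))
print(identify_smell({"R2", "R9"}))
```

Notice that receptor R4 appears in two patterns, so activity on that one line cannot settle which smell is present. Only the combination does.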

Did you know? A seizure of the nose, or sneezing at the sun

As many as one in four people in the U.S. sneeze when they look into bright light. This

photic sneeze reflex appears to serve no biological purpose whatsoever. Why would we

have such a reflex, and how does it work?

The basic function of a sneeze is fairly obvious. It expels substances or objects that are

irritating your airways. Unlike coughs, sneezes are stereotyped actions, meaning that each